Integrate via Terraform

Installation with Terraform

Prerequisites

- DATADOME_SERVER_SIDE_KEY, available in your DataDome dashboard
- DATADOME_CLIENT_SIDE_KEY, available in your DataDome dashboard
- An up-to-date Terraform installation
- CLOUDFLARE_API_TOKEN for Workers script and routes edits, following this guide
- CLOUDFLARE_ZONE_ID, available in your Domain Overview page in Cloudflare
- CLOUDFLARE_ACCOUNT_ID, available in your Domain Overview page in Cloudflare
- CLOUDFLARE_PATTERN, mapping a route on which to apply the Worker

Protect your traffic

- Download the latest version of our Cloudflare Worker script.

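- Create an empty Terraform file, for example datadome_worker.tf, and paste the code below that matches your Cloudflare provider version (the first block targets provider v5, the second provider v4):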

# Configuration for Cloudflare provider v5
terraform {
  required_providers {
    cloudflare = {
      source  = "cloudflare/cloudflare"
      version = "~> 5"
    }
  }
}

variable "cloudflare_api_token" {}
variable "cloudflare_zone_id" {}
variable "cloudflare_account_id" {}
variable "cloudflare_pattern" {}
variable "datadome_server_side_key" {}
variable "datadome_client_side_key" {}

provider "cloudflare" {
  api_token = var.cloudflare_api_token
}

resource "cloudflare_worker" "datadome_worker" {
  account_id = var.cloudflare_account_id
  name       = "datadome_worker"
}

resource "cloudflare_workers_route" "catch_all_route" {
  zone_id    = var.cloudflare_zone_id
  pattern    = var.cloudflare_pattern
  script     = cloudflare_worker.datadome_worker.name
  depends_on = [cloudflare_workers_deployment.datadome_worker_deployment]
}

resource "cloudflare_worker_version" "datadome_worker_version" {
  account_id         = var.cloudflare_account_id
  worker_id          = cloudflare_worker.datadome_worker.id
  compatibility_date = "2025-04-14"
  main_module        = "datadome.js"

  modules = [{
    content_file = "dist/datadome.js"
    content_type = "application/javascript+module"
    name         = "datadome.js"
  }]

  bindings = [
    {
      name = "DATADOME_SERVER_SIDE_KEY"
      text = var.datadome_server_side_key
      type = "secret_text"
    },
    {
      name = "DATADOME_CLIENT_SIDE_KEY"
      text = var.datadome_client_side_key
      type = "secret_text"
    }
  ]
}

resource "cloudflare_workers_deployment" "datadome_worker_deployment" {
  account_id  = var.cloudflare_account_id
  script_name = cloudflare_worker.datadome_worker.name
  strategy    = "percentage"

  versions = [{
    percentage = 100
    version_id = cloudflare_worker_version.datadome_worker_version.id
  }]
}

# Configuration for Cloudflare provider v4
terraform {
  required_providers {
    cloudflare = {
      source  = "cloudflare/cloudflare"
      version = "~> 4"
    }
  }
}

variable "cloudflare_api_token" {}
variable "cloudflare_zone_id" {}
variable "cloudflare_account_id" {}
variable "cloudflare_pattern" {}
variable "datadome_server_side_key" {}
variable "datadome_client_side_key" {}

provider "cloudflare" {
  api_token = var.cloudflare_api_token
}

resource "cloudflare_worker_route" "catch_all_route" {
  zone_id     = var.cloudflare_zone_id
  pattern     = var.cloudflare_pattern
  script_name = cloudflare_worker_script.datadome_worker.name
}

resource "cloudflare_worker_script" "datadome_worker" {
  account_id = var.cloudflare_account_id
  name       = "datadome_worker"
  content    = file("${path.module}/dist/datadome.js")
  module     = true

  secret_text_binding {
    name = "DATADOME_SERVER_SIDE_KEY"
    text = var.datadome_server_side_key
  }

  secret_text_binding {
    name = "DATADOME_CLIENT_SIDE_KEY"
    text = var.datadome_client_side_key
  }
}


- Export the mentioned variables (DataDome keys are available in your dashboard):

export TF_VAR_datadome_server_side_key=${DATADOME_SERVER_SIDE_KEY}

export TF_VAR_datadome_client_side_key=${DATADOME_CLIENT_SIDE_KEY}

export TF_VAR_cloudflare_api_token=${CLOUDFLARE_API_TOKEN}

export TF_VAR_cloudflare_zone_id=${CLOUDFLARE_ZONE_ID}

export TF_VAR_cloudflare_account_id=${CLOUDFLARE_ACCOUNT_ID}

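export TF_VAR_cloudflare_pattern=${CLOUDFLARE_PATTERN}

- Run:

terraform init

terraform plan

# With the provider v4 configuration, two resources will be created: the Worker script and the Worker route.
# The provider v5 configuration declares four: the Worker, its version, its deployment, and the route.

terraform apply

Congrats! You can now see your traffic in your DataDome dashboard.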
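Terraform reads each input variable from an environment variable prefixed with TF_VAR_, so a forgotten export only shows up as a prompt or an error when the plan runs. The following is a minimal pre-flight check, sketched for Node.js. The helper name missingTerraformVars is hypothetical, and the variable list simply mirrors the exports above.

```javascript
// Names of the Terraform input variables declared in datadome_worker.tf.
const REQUIRED_VARIABLES = [
  'datadome_server_side_key',
  'datadome_client_side_key',
  'cloudflare_api_token',
  'cloudflare_zone_id',
  'cloudflare_account_id',
  'cloudflare_pattern',
];

// Hypothetical helper: returns the TF_VAR_* names that are unset or blank
// in the given environment object.
function missingTerraformVars(env) {
  return REQUIRED_VARIABLES
    .map((name) => `TF_VAR_${name}`)
    .filter((key) => typeof env[key] !== 'string' || env[key].trim() === '');
}

// Usage: pass process.env when running under Node.js.
const missing = missingTerraformVars(
  typeof process !== 'undefined' ? process.env : {}
);
if (missing.length > 0) {
  console.warn(`Missing Terraform variables: ${missing.join(', ')}`);
}
```

Running this before terraform plan lists any variable that still needs to be exported.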

Configuration

The configuration is done inside the script, using constants.

Server-side settings

| Setting name in Worker's code | Environment variable type | Description | Required | Default value | Example |

|---|---|---|---|---|---|

| DATADOME_SERVER_SIDE_KEY | Secret | Your DataDome server-side key, found in your dashboard. | Yes | - | - |

| DATADOME_TIMEOUT | Text | Request timeout to DataDome API, in milliseconds. | No | 300 | 350 |

| DATADOME_URL_REGEX_EXCLUSION | Text | Regular expression to exclude URLs from the DataDome analysis. | No | List of excluded static assets below | - |

| DATADOME_URL_REGEX_INCLUSION | Text | Regular expression to only include URLs in the DataDome analysed traffic. | No | - | /login*/i |

| DATADOME_IP_EXCLUSION | Text | List of IPs or CIDR from which traffic will be excluded from the DataDome analysis. | No | - | ["192.168.0.1", "192.168.0.2", "192.168.0.0/24"] |

| DATADOME_LOGPUSH_CONFIGURATION | Text | List of Enriched headers names to log inside Logpush. | No | - | ["X-DataDome-botname", "X-DataDome-captchapassed", "X-DataDome-isbot"] |

| DATADOME_ENABLE_GRAPHQL_SUPPORT | Text | Extract GraphQL operation name and type on request to a /graphql endpoint to improve protection. | No | false | true |

| DATADOME_ENABLE_REFERRER_RESTORATION | Text | Set to true to restore original referrer when a challenge is passed. | No | false | true |

| DATADOME_ENABLE_DEBUGGING | Text | Log in Workers logs detailed information about the DataDome process. | No | false | true |

| DATADOME_ENABLE_MCP_SUPPORT | Text | Boolean to enable Model Context Protocol support. | No | false | true |

| DATADOME_CUSTOM_FIELD_STRING_1 | Text | Static value or callback function to send a custom string. Refer to Add custom field section. | No | - | |

| DATADOME_CUSTOM_FIELD_STRING_2 | Text | Static value or callback function to send a custom string. Refer to Add custom field section. | No | - | |

| DATADOME_CUSTOM_FIELD_STRING_3 | Text | Static value or callback function to send a custom string. Refer to Add custom field section. | No | - | |

| DATADOME_CUSTOM_FIELD_INTEGER_1 | Text | Static value or callback function to send a custom integer. Refer to Add custom field section. | No | - | |

| DATADOME_CUSTOM_FIELD_INTEGER_2 | Text | Static value or callback function to send a custom integer. Refer to Add custom field section. | No | - | |

| DATADOME_CUSTOM_FIELD_FLOAT_1 | Text | Static value or callback function to send a custom float. Refer to Add custom field section. | No | - | |

| DATADOME_USER_ID | Text | Static value or callback function to send a custom value representing a user ID. Refer to Add custom field section. | No | - | |

| DATADOME_PRODUCT_ID | Text | Static value or callback function to send a custom value representing a product ID. Refer to Add custom field section. | No | - | |

Default value of DATADOME_URL_REGEX_EXCLUSION (excluded static assets):

/\.(avi|flv|mka|mkv|mov|mp4|mpeg|mpg|mp3|flac|ogg|ogm|opus|wav|webm|webp|bmp|gif|ico|jpeg|jpg|png|svg|svgz|swf|eot|otf|ttf|woff|woff2|css|less|js|map)$/i

Client-side settings

| Setting name in Worker's code | Environment variable type | Description | Required | Default value | Example |

|---|---|---|---|---|---|

| DATADOME_CLIENT_SIDE_KEY | Secret | Your DataDome client-side key, found in your dashboard. | Yes | - | - |

| DATADOME_JS_URL | Text | URL of the DataDome JS tag that can be changed to include the tag as a first party. | No | https://js.datadome.co/tags.js | https://ddfoo.com/tags.js |

| DATADOME_JS_ENDPOINT | Text | Endpoint of the DataDome JS Tag. | No | - | - |

| DATADOME_JS_TAG_OPTIONS | Text | JSON object describing DataDome JS Tag options. | No | { "ajaxListenerPath": true } | { "ajaxListenerPath": "example.com", "allowHtmlContentTypeOnCaptcha": true } |

| DATADOME_JS_URL_REGEX_EXCLUSION | Text | Regular expression to NOT set the DataDome JS Tag on matching URLs. | No | - | - |

| DATADOME_JS_URL_REGEX_INCLUSION | Text | Regular expression to set the DataDome JS Tag on matching URLs. | No | - | /login*/i |
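To get a feel for how the regex and IP exclusion settings behave, the sketch below evaluates the example values from the tables against a few requests. It is an illustration only, not the module's implementation: the helper names are hypothetical, the order in which inclusion and exclusion are combined is an assumption, and the CIDR check handles IPv4 only.

```javascript
// Default exclusion regex and example values copied from the tables above.
const URL_REGEX_EXCLUSION = /\.(avi|flv|mka|mkv|mov|mp4|mpeg|mpg|mp3|flac|ogg|ogm|opus|wav|webm|webp|bmp|gif|ico|jpeg|jpg|png|svg|svgz|swf|eot|otf|ttf|woff|woff2|css|less|js|map)$/i;
const URL_REGEX_INCLUSION = /login*/i;
const IP_EXCLUSION = ['192.168.0.1', '192.168.0.2', '192.168.0.0/24'];

// Converts a dotted IPv4 address to an unsigned 32-bit integer, or null.
function ipv4ToInt(ip) {
  const parts = ip.split('.').map(Number);
  const valid = parts.length === 4 &&
    parts.every((p) => Number.isInteger(p) && p >= 0 && p <= 255);
  if (!valid) return null;
  return ((parts[0] << 24) >>> 0) + (parts[1] << 16) + (parts[2] << 8) + parts[3];
}

// Hypothetical helper: true if ip equals an entry or falls inside a CIDR range.
function isIpExcluded(ip, list) {
  const addr = ipv4ToInt(ip);
  if (addr === null) return false;
  return list.some((entry) => {
    const [base, bitsText] = entry.split('/');
    const baseInt = ipv4ToInt(base);
    const bits = bitsText === undefined ? 32 : Number(bitsText);
    if (baseInt === null || !Number.isInteger(bits) || bits < 0 || bits > 32) {
      return false;
    }
    // A /0 prefix matches everything; avoid shifting by 32 bits.
    const mask = bits === 0 ? 0 : (0xffffffff << (32 - bits)) >>> 0;
    return ((addr & mask) >>> 0) === ((baseInt & mask) >>> 0);
  });
}

// Hypothetical helper: assumes inclusion is checked first, then exclusion.
function shouldAnalyzeUrl(pathname, { inclusion, exclusion } = {}) {
  if (inclusion && !inclusion.test(pathname)) return false;
  if (exclusion && exclusion.test(pathname)) return false;
  return true;
}

console.log(shouldAnalyzeUrl('/assets/app.js', { exclusion: URL_REGEX_EXCLUSION }));
console.log(shouldAnalyzeUrl('/login', { inclusion: URL_REGEX_INCLUSION }));
console.log(isIpExcluded('192.168.0.77', IP_EXCLUSION));
```

Testing candidate patterns this way before deploying helps catch a regex that excludes more traffic than intended.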

Update with Terraform

- Download the latest version of our Cloudflare Worker script.

- Paste the content of the datadome.js file into the file used for the content of the script of your Worker.

- Run:

terraform plan

One resource will be changed.

- Run:

terraform apply

Uninstallation with Terraform

To delete the DataDome Worker and its script, run from the location of your datadome_worker.tf and terraform.tfstate:

terraform destroy -target cloudflare_worker_script.datadome_worker

Logging

DataDome custom logging

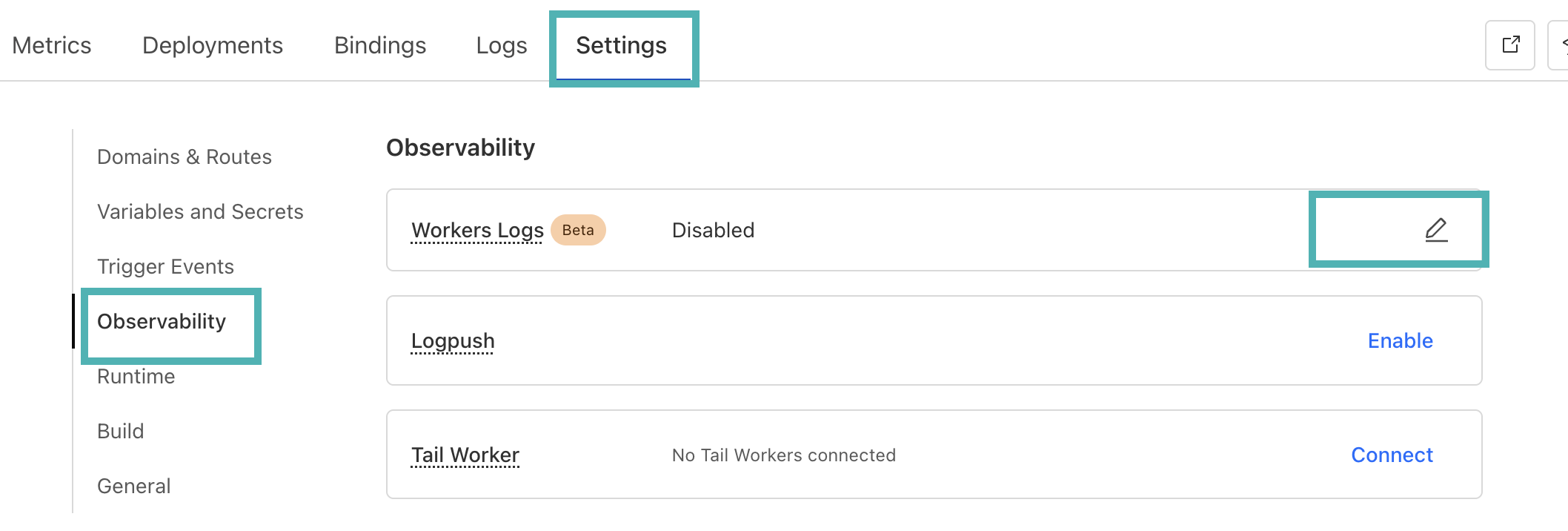

- Inside the Cloudflare Dashboard, go to the DataDome Worker's page.

- Click on Settings, then go to the Observability section.

- Click on the pen icon next to Workers Logs.

- Enable logs.

- Click on Deploy.

- You will see the logs inside the Logs tab.

By default, DataDome logs errors only (such as errors in the configuration). If you want detailed logs for debugging, set DATADOME_ENABLE_DEBUGGING to true.

DataDome logs format

The DataDome custom logs have the following format:

{
  "step": "string",
  "result": "string",
  "reason": "string",
  "details": {
    "key": "value"
  },
  "company": "DataDome",
  "line": 123
}

Logpush

You can use Logpush to send logs to a destination supported by Logpush (Datadog, Splunk, S3 Bucket…).

Cloudflare plan: Logpush is available to customers on Cloudflare’s Enterprise plan.
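Once logs reach an external destination, it can be useful to separate DataDome entries from other Worker output. The sketch below assumes each custom log entry arrives as a JSON string in the DataDome logs format described earlier; how your destination actually wraps the entries may differ. The helper name parseDataDomeLog is hypothetical.

```javascript
// Hypothetical helper: returns the parsed entry when the line is a DataDome
// custom log (identified by its "company" field), or null otherwise.
function parseDataDomeLog(line) {
  let entry;
  try {
    entry = JSON.parse(line);
  } catch (err) {
    return null; // not JSON, so not a DataDome entry
  }
  if (entry === null || typeof entry !== 'object' || entry.company !== 'DataDome') {
    return null;
  }
  return entry;
}

// Sample input: one entry in the documented format, one unrelated line.
const sampleLines = [
  '{"step":"string","result":"string","reason":"string","details":{"key":"value"},"company":"DataDome","line":123}',
  'plain text emitted by another part of the Worker',
];

const dataDomeEntries = sampleLines
  .map(parseDataDomeLog)
  .filter((entry) => entry !== null);

for (const entry of dataDomeEntries) {
  console.log(`${entry.step}: ${entry.result} (${entry.reason})`);
}
```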

Update the Worker’s script

- Set the DATADOME_LOGPUSH_CONFIGURATION value to the names of the values you want to log, as an array of strings.

The possible values are listed on the Enriched headers page.

E.g.:

DATADOME_LOGPUSH_CONFIGURATION = '["X-DataDome-botname", "X-DataDome-isbot", "x-datadomeresponse"]'

Enable Logpush

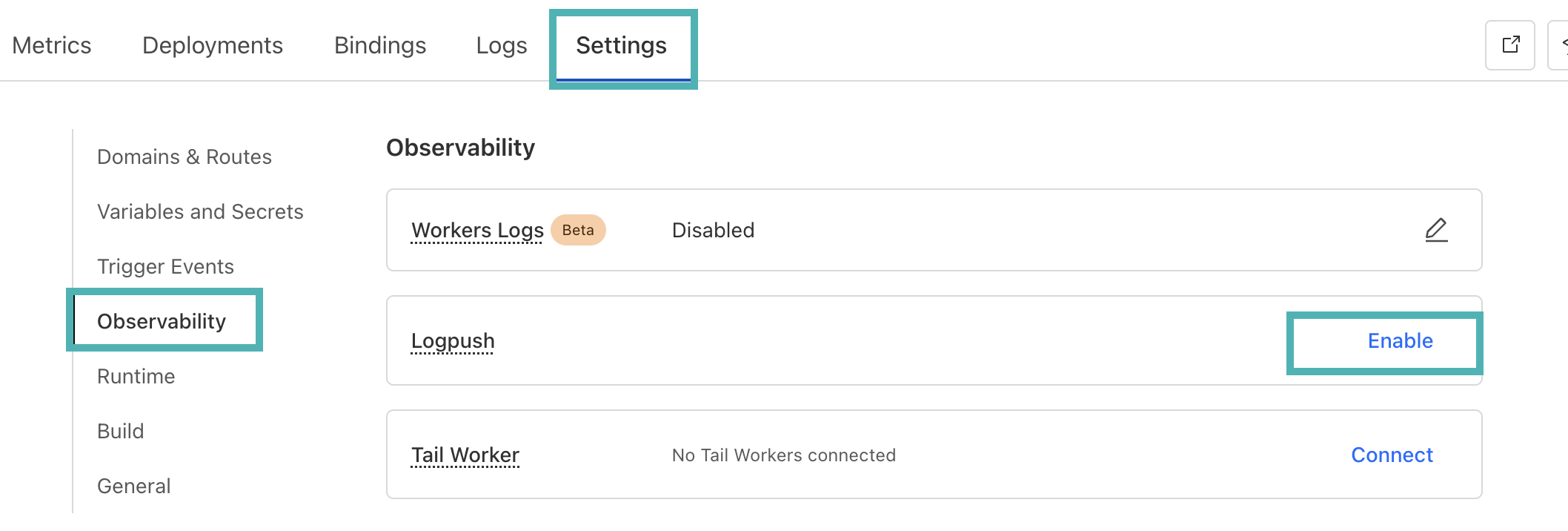

- Inside the Cloudflare Dashboard, go to the DataDome Worker's page.

- Click on Settings, then go to the Observability section.

- Click on Enable next to Logpush.

Advanced configuration

Add custom fields

Requires version 2.2.0 of the CloudflareWorker module.

Custom fields feature: DataDome lets you enrich our detection engine in real time by sending custom fields containing your business data. These fields can be used for specific detection models.

👋 Please reach out to our support team for reviewing the data received.

Sample callback function to set custom fields dynamically

The callback is a function that receives a single parameter, the Cloudflare HTTP request:

// Sample code for custom fields
// Editing directly the datadome.js file
let DATADOME_CUSTOM_FIELD_STRING_1 = function (request) {
  if (request.headers.has('x-user-tier')) {
    return request.headers.get('x-user-tier');
  } else {
    return 'standard';
  }
};

// Sample code for custom fields
// when calling activateDataDome
const dataDomeHandler = activateDataDome(myHandler, {
  serverSideKey: env.DATADOME_SERVER_SIDE_KEY,
  clientSideKey: env.DATADOME_CLIENT_SIDE_KEY,
  customFieldString1: function (request) {
    if (request.headers.has('x-user-tier')) {
      return request.headers.get('x-user-tier');
    } else {
      return 'standard';
    }
  },
  // ...other options
});
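The same callback shape can be used for the integer fields. Here is a minimal sketch, assuming a hypothetical x-cart-items header set by your own application; the fallback value of 0 is also an assumption, so check with the support team which value suits your detection models.

```javascript
// Hypothetical example: report the number of cart items as a custom integer.
// The 'x-cart-items' header is an assumption made for illustration only.
let DATADOME_CUSTOM_FIELD_INTEGER_1 = function (request) {
  const raw = request.headers.get('x-cart-items');
  const parsed = Number.parseInt(raw, 10);
  // Fall back to 0 when the header is absent or does not start with digits.
  return Number.isNaN(parsed) ? 0 : parsed;
};
```

Validating the header inside the callback keeps a malformed value from being forwarded as the custom field.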

Restore the referrer

After passing a DataDome challenge on browsers other than Firefox, the referrer value is updated to the current URL, which can lead to inconsistent results in website analytics.

It is possible to restore the Referer header to its original value for your backend:

- Contact our support team. They will review your requirements and provide you with the best recommendations.

- Set the boolean value of the DATADOME_ENABLE_REFERRER_RESTORATION option to true.

Enable GraphQL support

It is possible to enable GraphQL support and extract the operation type and operation name from the body of GraphQL requests.

You need to set the boolean value of the DATADOME_ENABLE_GRAPHQL_SUPPORT option to true.

Once enabled, POST requests targeting a graphql endpoint with content-type: application/json will be analyzed to extract GraphQL operation name and type.
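To illustrate what "operation name and type" refer to, the sketch below pulls both out of a typical GraphQL JSON body. It is not the module's extraction logic: the helper name is hypothetical, and the regex only covers a single operation at the start of the query string.

```javascript
// Hypothetical helper: extracts the operation type and name from the JSON
// body of a GraphQL POST request. Returns null when the body is not a
// GraphQL payload.
function extractGraphQLOperation(bodyText) {
  let body;
  try {
    body = JSON.parse(bodyText);
  } catch (err) {
    return null;
  }
  if (!body || typeof body.query !== 'string') return null;

  // Matches "query", "mutation" or "subscription", optionally followed by a name.
  const match = /^\s*(query|mutation|subscription)\b\s*([A-Za-z_]\w*)?/.exec(body.query);
  // The shorthand form "{ ... }" has no keyword and is a query.
  const type = match ? match[1] : 'query';
  const name = body.operationName || (match && match[2]) || null;
  return { type, name };
}

const example = JSON.stringify({
  query: 'mutation AddItem($id: ID!) { addItem(id: $id) { id } }',
});
console.log(extractGraphQLOperation(example));
```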

Enable MCP support

Starting from version 2.3.0 of the module, it is possible to enable support for the Model Context Protocol and extract information from the request body.

You need to set the boolean value of the DATADOME_ENABLE_MCP_SUPPORT option to true.

Once enabled, requests targeting an endpoint with a /mcp route will be analyzed to extract MCP properties according to the official specification:

- mcp-session-id and mcp-protocol-version headers
- jsonRpcVersion, jsonRpcRequestId, and mcpMethod request body fields
- mcpParamsClientInfoName and mcpParamsClientInfoVersion request body fields when the method is initialize
- mcpParamsToolName request body field when the method is tools/call
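The properties listed above map onto an MCP request roughly as sketched below. This is an illustration under assumptions, not the module's code: the helper name and the mcpSessionId and mcpProtocolVersion keys are hypothetical, while the other keys reuse the names from the list. The body is read as a JSON-RPC message with jsonrpc, id, method, and params members.

```javascript
// Hypothetical helper: collects MCP properties from request headers and a
// JSON-RPC request body. `headers` only needs a get(name) method.
function extractMcpProperties(headers, bodyText) {
  const props = {
    mcpSessionId: headers.get('mcp-session-id') ?? null,
    mcpProtocolVersion: headers.get('mcp-protocol-version') ?? null,
  };

  let body;
  try {
    body = JSON.parse(bodyText);
  } catch (err) {
    return props; // body is not JSON: only the header values are available
  }
  if (body === null || typeof body !== 'object') return props;

  props.jsonRpcVersion = body.jsonrpc;
  props.jsonRpcRequestId = body.id;
  props.mcpMethod = body.method;

  const params = body.params && typeof body.params === 'object' ? body.params : {};
  if (body.method === 'initialize' && params.clientInfo) {
    props.mcpParamsClientInfoName = params.clientInfo.name;
    props.mcpParamsClientInfoVersion = params.clientInfo.version;
  } else if (body.method === 'tools/call') {
    props.mcpParamsToolName = params.name;
  }
  return props;
}

// Usage with a hand-built tools/call request; a Map stands in for Headers.
const sampleHeaders = new Map([['mcp-session-id', 'abc123']]);
const sampleBody = JSON.stringify({
  jsonrpc: '2.0',
  id: 7,
  method: 'tools/call',
  params: { name: 'search', arguments: { q: 'shoes' } },
});
console.log(extractMcpProperties(sampleHeaders, sampleBody));
```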
